This put the industry at a fork in the road. Rebuild is measured in days, and the risk of another drive failing, which could result in data loss through bad blocks, is on the threshold of unacceptable. RAID 6, the dual-parity approach, took a major hit with the release of 4TB and larger drives.

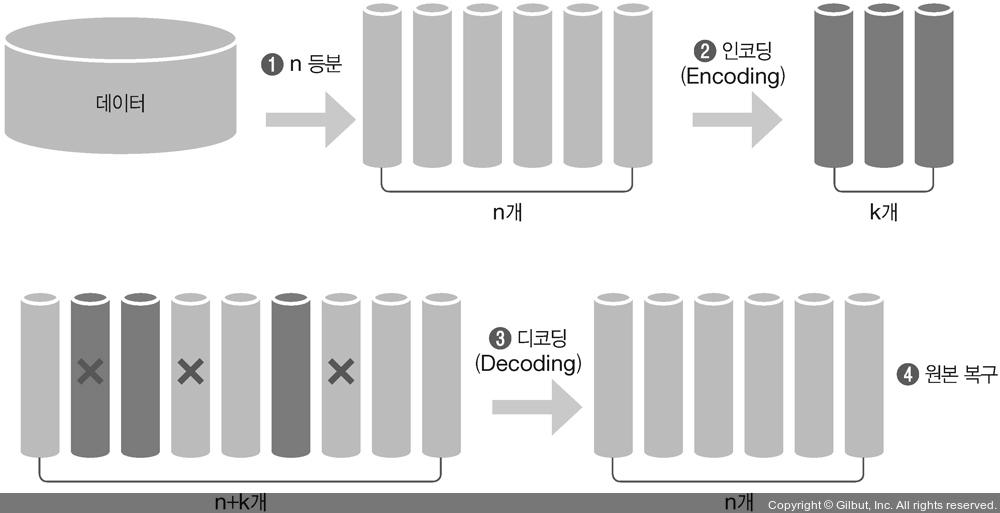

Again, recovery involves reading one of the parities and all of the remaining data, and can take a long while. This increases capacity usage to two sevenths or thereabouts in typical arrays. In response to potential data loss risks, the industry created a dual-parity approach, where two non-overlapping parities are created for drive set. The loss of another drive, or a bad block on any drive, will cause data loss. Parity recovery takes much longer to do, since all the data on all the drives in the set has to be read to allow generation of the missing blocks. This is easy enough with mirroring, but there is the risk of having a defect in the billions of blocks on the good drive that causes an unrecoverable data loss. The next step involves copying data from good drives to the failed drive. Typically an array has a spare drive or two for this contingency, or else the failed drive has to be replaced first. When a drive fails, things start to get a bit rough. Writing an updated block involves two drive write operations, and parity may require blocks from all the drives in a set to be read. Mirroring obviously doubles data size, while parity typically adds one-fifth more data, though it is dependent on how many drives are in a set. Both processes described above increase the amount of storage used. Organizations need to consider pros and cons of the various data protection approaches when designing their storage systems.įirst, let's look at the challenges that come with RAID. However, RAID comes with its own set of issues, which the industry has worked to overcome by developing new techniques, including erasure coding.

RAID falls into two categories: Either a complete mirror image of the data is kept on a second drive or parity blocks are added to the data so that failed blocks can be recovered. It’s a way to spread data over a set of drives to prevent the loss of a drive causing permanent loss of data. RAID, or Redundant Array of Independent Disks, is a familiar concept to most IT professionals.
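As a rough illustration of how a parity block lets the contents of a failed drive be regenerated, the following Python sketch uses byte-wise XOR over a toy stripe. It is a minimal sketch, not how any particular array is implemented: the function names (`xor_blocks`, `make_parity`, `rebuild_missing`) and the 4-byte blocks are assumptions made for the example, and the second parity used by dual-parity schemes relies on different arithmetic that is not shown here.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def make_parity(data_blocks):
    """Compute a single parity block for one stripe (hypothetical helper)."""
    return xor_blocks(data_blocks)


def rebuild_missing(surviving_blocks, parity):
    """Regenerate the one missing block from every survivor plus parity.

    Every surviving block has to be read, which is why parity rebuilds
    are slow on large drives.
    """
    return xor_blocks(list(surviving_blocks) + [parity])


# Toy stripe: three data drives holding one 4-byte block each.
data = [b"\x01\x02\x03\x04", b"\x10\x20\x30\x40", b"\xaa\xbb\xcc\xdd"]
parity = make_parity(data)

# Simulate losing the second drive and rebuilding its block.
rebuilt = rebuild_missing([data[0], data[2]], parity)
```

Note that the rebuild only works if every surviving block is readable. If a second block in the stripe is missing or corrupt, XOR of the remainder no longer yields the lost data, which is the single-parity failure mode the text describes.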